This article was originally featured on MIT Press.

In 2017, Google researchers introduced a novel machine-learning program called a “transformer” for processing language. While they were mostly interested in improving machine translation—the name comes from the goal of transforming one language into another—it didn’t take long for the AI community to realize that the transformer had tremendous, far-reaching potential.

Trained on vast collections of documents to predict what comes next based on preceding context, it developed an uncanny knack for the rhythm of the written word. You could start a thought, and like a friend who knows you exceptionally well, the transformer could complete your sentences. If your sequence began with a question, then the transformer would spit out an answer. Even more surprisingly, if you began describing a program, it would pick up where you left off and output that program.

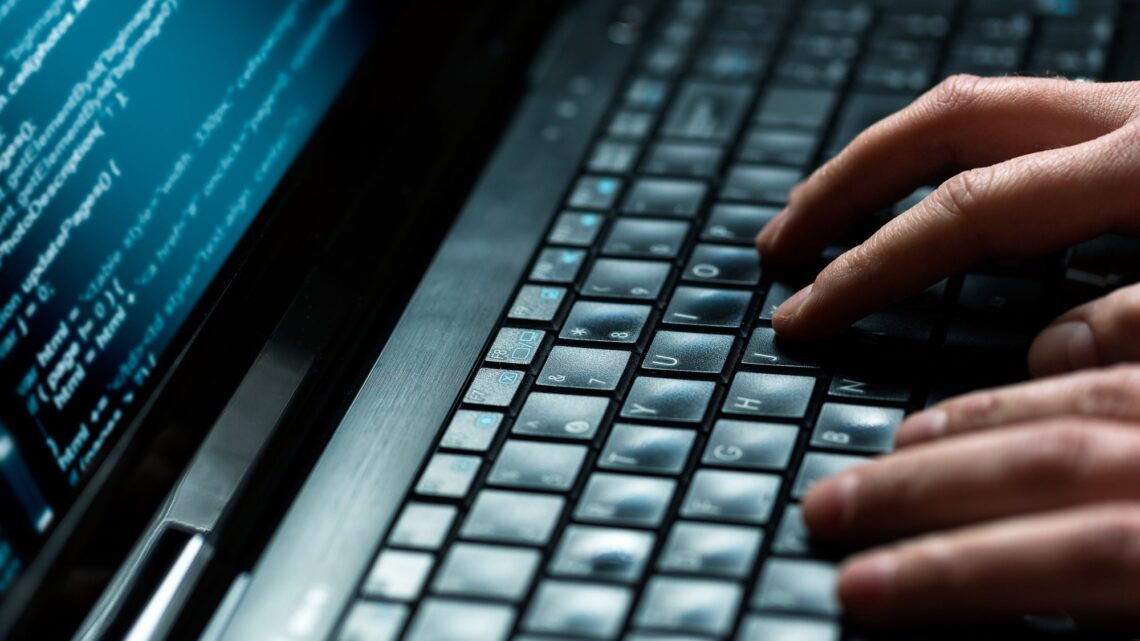

Programming, however, has long been recognized as difficult, with its arcane notation and unforgiving attitude toward mistakes. It’s well documented that novice programmers can struggle to correctly specify even a simple task like computing a numerical average, failing more than half the time. Professional programmers, too, have written buggy code that has crashed spacecraft, cars, and even the internet itself.

So when it was discovered that transformer-based systems like ChatGPT could turn casual human-readable descriptions into working code, there was much reason for excitement. It’s exhilarating to think that, with the help of generative AI, anyone who can write can also write programs. Andrej Karpathy, one of the architects of the current wave of AI, declared, “The hottest new programming language is English.” With amazing advances announced seemingly daily, you’d be forgiven for believing that the era of learning to program is behind us. But while recent developments have fundamentally…
