OpenAI CEO Sam Altman believes long-awaited nuclear fusion may be the silver bullet needed to solve artificial intelligence’s gluttonous energy appetite and pave the way for an AI revolution. When that revolution does arrive, however, it might not seem quite as shocking as he once claimed.

Altman touched on AI’s growing demands earlier this week while speaking at a Bloomberg event outside of the annual World Economic Forum meeting in Davos, Switzerland. The CEO said powerful new AI models would likely require even more energy consumption than previously imagined. Solving that energy deficit, he suggested, will require a “breakthrough” in nuclear fusion.

“There’s no way to get there without a breakthrough,” Altman said at the event, according to Reuters. “It motivates us to go invest more in [nuclear] fusion.”

AI’s energy problem

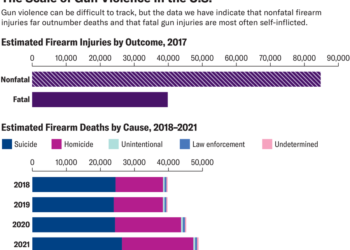

Though some AI proponents believe insights gleaned from advanced models could help fight climate change in novel ways, a growing body of research suggests the up-front energy required to train these complex models is taking a toll of its own. Experts expect that the vast amounts of data needed to train models like OpenAI’s GPT and Google’s Bard could expand the global data server industry, which the International Energy Agency (IEA) estimates already accounts for around 2-3% of global greenhouse gas emissions.

Researchers estimate that training a single large language model like GPT-4 could emit around 300 tons of CO2. Others estimate that a single image spit out by AI image generator tools like Dall-E or Stable Diffusion requires the same amount of energy as charging a smartphone. The massive server farms needed to facilitate AI training also require vast amounts of water to stay cool. GPT-3 alone, recent research suggests, may have consumed 185,000 gallons of water during its training period.

Altman hopes…
