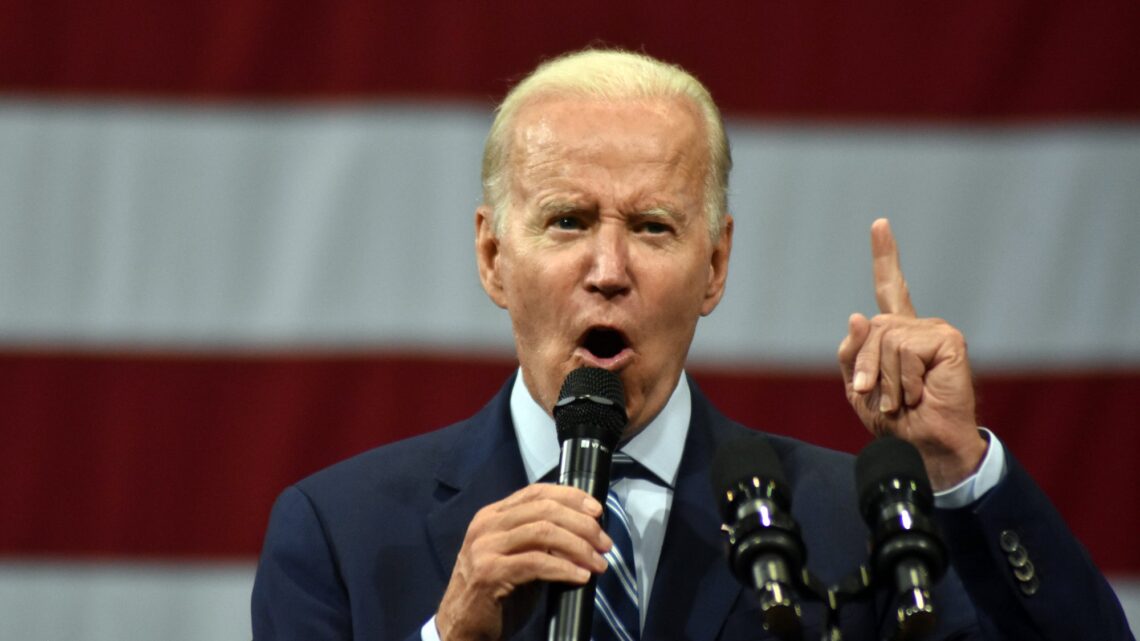

AI vocal cloning technology is reportedly already muddying the waters ahead of the 2024 election. According to a statement issued by the New Hampshire attorney general’s office on Monday, a robocall campaign deployed over the weekend used an imitation of President Joe Biden’s voice to urge recipients not to vote in the state’s January 23 presidential primary.

“Voting this Tuesday only enables the Republicans in their quest to elect Donald Trump again,” an AI-generated Biden told residents over the phone. “Your vote makes a difference in November, not this Tuesday.”

The disinformation campaign’s orchestrators are currently unknown, but it comes from “obviously somebody who wants to hurt Joe Biden,” according to former New Hampshire Democratic Party chair Kathy Sullivan, as quoted in NBC News’ initial exclusive report.


A growing problem

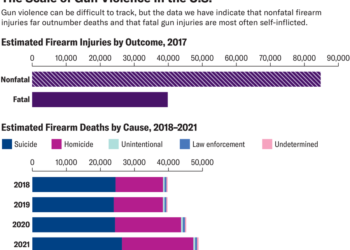

AI-generated content, including deepfaked audio, video, and imagery, is a growing concern among misinformation experts. Multiple reports have warned that today’s media, internet, and social landscapes are unprepared for a likely imminent deluge of falsified “fake news” content as the 2024 presidential election intensifies. A recent study conducted by researchers at the UK’s University College London indicates that AI-generated audio can fool as many as one in four listeners. Over 1,600 videos uploaded to YouTube have featured deepfaked celebrities like Taylor Swift and Steve Harvey hawking “medical card” schemes and other scams, collectively amassing over 195 million views in the process. But unlike “free money” ploys from AI Oprah, the latest vocal cloning example is explicitly meant to influence the US political landscape.

“Disgraceful and an unacceptable affront to democracy”

As for this weekend’s misinfo campaign, the fake Biden falsely claimed an ongoing statewide campaign to…
