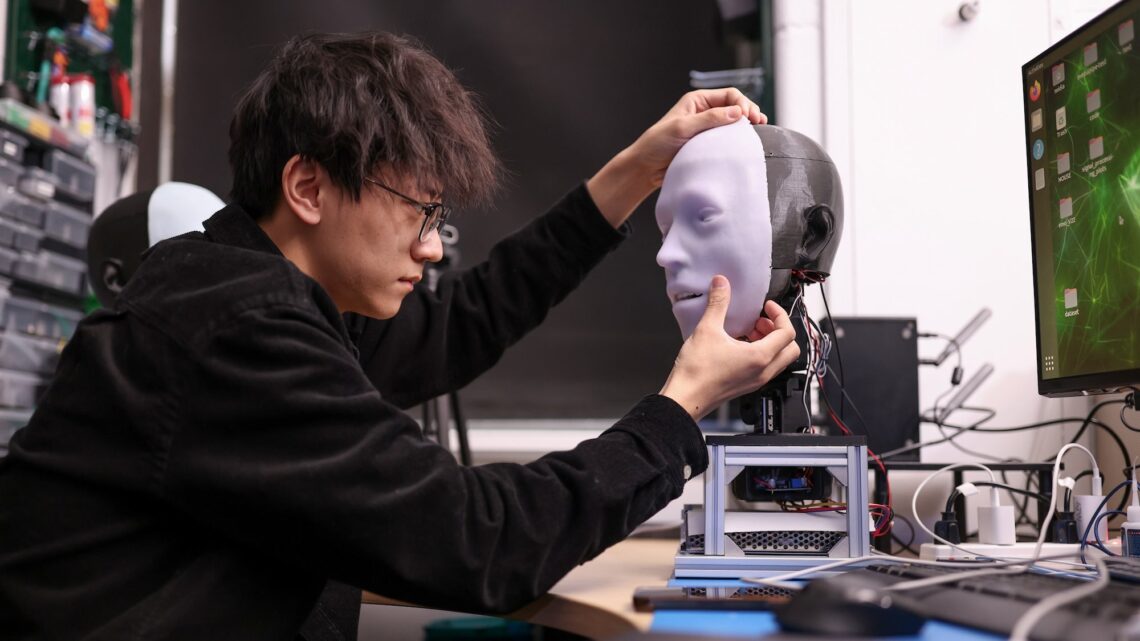

If you want your humanoid robot to realistically simulate facial expressions, it’s all about timing. And for the past five years, engineers at Columbia University’s Creative Machines Lab have been honing their robot’s reflexes down to the millisecond. Their results, detailed in a new study published in Science Robotics, are now available to see for yourself.

Meet Emo, the robot head capable of anticipating and mirroring human facial expressions, including smiles, within 840 milliseconds. But whether or not you’ll be left smiling at the end of the demonstration video remains to be seen.

AI is getting pretty good at mimicking human conversations, with heavy emphasis on “mimicking.” But when it comes to visibly approximating emotions, its physical robot counterparts still have a lot of catching up to do. A machine misjudging when to smile isn’t just awkward; it draws attention to its artificiality.

Human brains, in comparison, are incredibly adept at interpreting huge numbers of visual cues in real time, and then responding accordingly with various facial movements. That makes it extremely difficult to teach AI-powered robots the nuances of expression. It’s also hard to build a mechanical face capable of realistic muscle movements that don’t veer into the uncanny.

Emo’s creators attempt to solve some of these issues, or at the very least, help narrow the gap between human and robot expressivity. To construct their new bot, a team led by AI and robotics expert Hod Lipson first designed a realistic robotic human head that includes 26 separate actuators to enable subtle facial expressions. Each of Emo’s pupils also contains a high-resolution camera to follow the eyes of its human conversation partner, another important nonverbal cue for people. Finally,…
