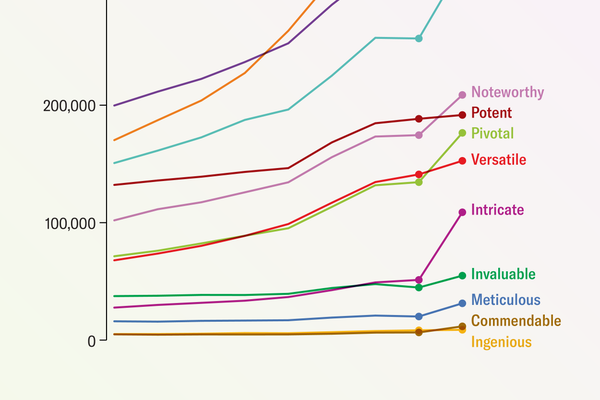

AI Chatbots Have Thoroughly Infiltrated Scientific Publishing

One percent of scientific articles published in 2023 showed signs of generative AI’s potential involvement, according to a recent analysis

Amanda Montañez; Source: Andrew Gray

Researchers are misusing ChatGPT and other artificial intelligence chatbots to produce scientific literature. At least, that’s a new fear that some scientists have raised, citing a stark rise in suspicious AI shibboleths showing up in published papers.

Some of these tells—such as the inadvertent inclusion of “certainly, here is a possible introduction for your topic” in a recent paper in Surfaces and Interfaces, a journal published by Elsevier—are reasonably obvious evidence that a scientist used an AI chatbot known as a large language model (LLM). But “that’s probably only the tip of the iceberg,” says scientific integrity consultant Elisabeth Bik. (A representative of Elsevier told Scientific American that the publisher regrets the situation and is investigating how it could have “slipped through” the manuscript evaluation process.) In most other cases AI involvement isn’t as clear-cut, and automated AI text detectors are unreliable tools for analyzing a paper.

Researchers from several fields have, however, identified a few key words and phrases (such as “complex and multifaceted”) that tend to appear more often in AI-generated sentences than in typical human writing. “When you’ve looked at this stuff long enough, you get a feel for the style,” says Andrew Gray, a librarian and researcher at University College London.

LLMs are designed to generate text—but what they produce may or may not be factually…
