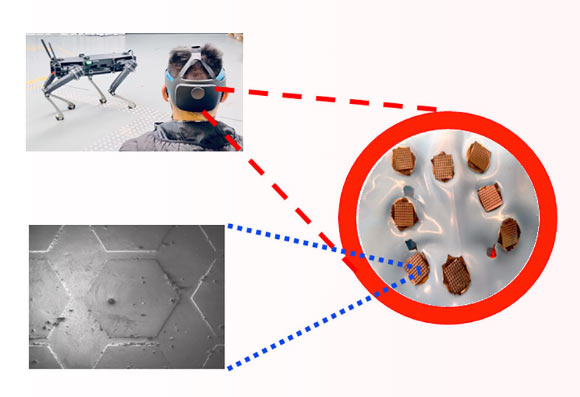

Using their non-invasive sensors, Professor Francesca Iacopi from the University of Technology Sydney and her colleagues have demonstrated hands-free communication with a quadruped robot through brain activity.

Brain-machine interfaces are communication systems that let an individual operate external devices through brain waves alone, without hands or voice commands. They have vast potential for future robotics, bionic prosthetics, neurogaming, electronics, and autonomous vehicles.

Such systems typically consist of three modules: an external sensory stimulus, a sensing interface, and a neural signal processing unit.

Among these, the sensing interface plays a crucial role. It detects the cortical electrical activity that encodes human intent (brain waves at frequencies of 1 to 150 Hz) through either implanted or wearable neural sensors, such as electroencephalography (EEG) electrodes.

Non-invasive sensors are often preferred when no severe disabilities are involved.

“The hands-free, voice-free technology works outside laboratory settings, anytime, anywhere,” Professor Iacopi said.

“It makes interfaces such as consoles, keyboards, touchscreens and hand-gesture recognition redundant.”

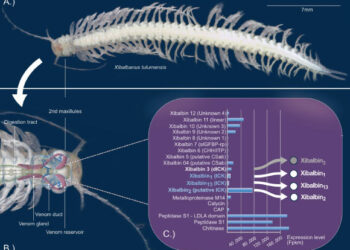

“By using cutting-edge graphene material, combined with silicon, we were able to overcome issues of corrosion, durability and skin contact resistance, to develop the wearable dry sensors.”

The graphene sensors developed by Professor Iacopi and co-authors are very conductive, easy to use and robust.

The hexagon-patterned sensors are positioned over the back of the scalp to detect brain waves from the visual cortex.

The sensors are resilient to harsh conditions, so they can be used in extreme operating environments.

The user wears a head-mounted augmented reality lens that displays flickering white squares.

When the operator concentrates on a particular square, the biosensor picks up their brain waves, and a decoder translates the signal into commands.
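The article does not describe how the decoder works. Systems built around flickering visual targets are commonly based on steady-state visually evoked potentials (SSVEP): a light flickering at a fixed frequency drives a response in the visual cortex at that frequency and its harmonics. Assuming that approach, the sketch below scores each square by the signal's power at its flicker frequency and at twice that frequency, then issues the command for the strongest one. The flicker frequencies, the 250 Hz sampling rate, the command names and the `synthetic_eeg` test signal are all hypothetical, chosen only for illustration.

```python
import math
from typing import Dict, List, Sequence

# Hypothetical mapping from each square's flicker frequency (Hz) to a robot command.
TARGETS: Dict[float, str] = {
    8.0: "turn left",
    10.0: "go forward",
    12.0: "turn right",
    15.0: "stop",
}


def power_at(signal: Sequence[float], freq_hz: float, fs_hz: float) -> float:
    """Power of `signal` at a single frequency, computed as one DFT bin."""
    re = im = 0.0
    for n, x in enumerate(signal):
        angle = 2.0 * math.pi * freq_hz * n / fs_hz
        re += x * math.cos(angle)
        im -= x * math.sin(angle)
    return (re * re + im * im) / len(signal)


def decode_command(
    signal: Sequence[float],
    fs_hz: float,
    targets: Dict[float, str] = TARGETS,
    harmonics: int = 2,
) -> str:
    """Pick the command whose flicker frequency (plus harmonics) carries the most power."""

    def score(freq_hz: float) -> float:
        # Sum power at f, 2f, ..., harmonics * f.
        return sum(power_at(signal, k * freq_hz, fs_hz) for k in range(1, harmonics + 1))

    return targets[max(targets, key=score)]


def synthetic_eeg(freq_hz: float, fs_hz: float = 250.0, seconds: float = 2.0) -> List[float]:
    """Hypothetical toy stand-in for one EEG channel: a response at freq_hz plus 50 Hz mains hum."""
    n_samples = int(fs_hz * seconds)
    return [
        math.sin(2 * math.pi * freq_hz * n / fs_hz)
        + 0.5 * math.sin(2 * math.pi * 50.0 * n / fs_hz)
        for n in range(n_samples)
    ]


if __name__ == "__main__":
    # Simulate an operator looking at the square that flickers at 12 Hz.
    print(decode_command(synthetic_eeg(12.0), 250.0))  # turn right
```

Using exact DFT bins keeps the example short. A practical decoder would also filter the raw signal, combine several electrodes and reject decisions below a confidence threshold, none of which the article details.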

The technology was…
