On Wednesday, Google announced the arrival of Gemini, its new multimodal large language model built from the ground up by the company’s AI division, DeepMind. Among its many functions, Gemini will underpin Google Bard, which has previously struggled to emerge from the shadow of its chatbot forerunner, OpenAI’s ChatGPT.

According to a December 6 blog post from Google CEO Sundar Pichai and DeepMind co-founder and CEO Demis Hassabis, there are technically three versions of the LLM (Gemini Ultra, Pro, and Nano), each meant for different applications. A “fine tuned” Gemini Pro now underpins Bard, while the Nano variant will be seen in products such as the Pixel 8 Pro smartphone. The Gemini variants will also arrive in Google Search, Ads, and Chrome in the coming months, although public access to Ultra will not become available until 2024.

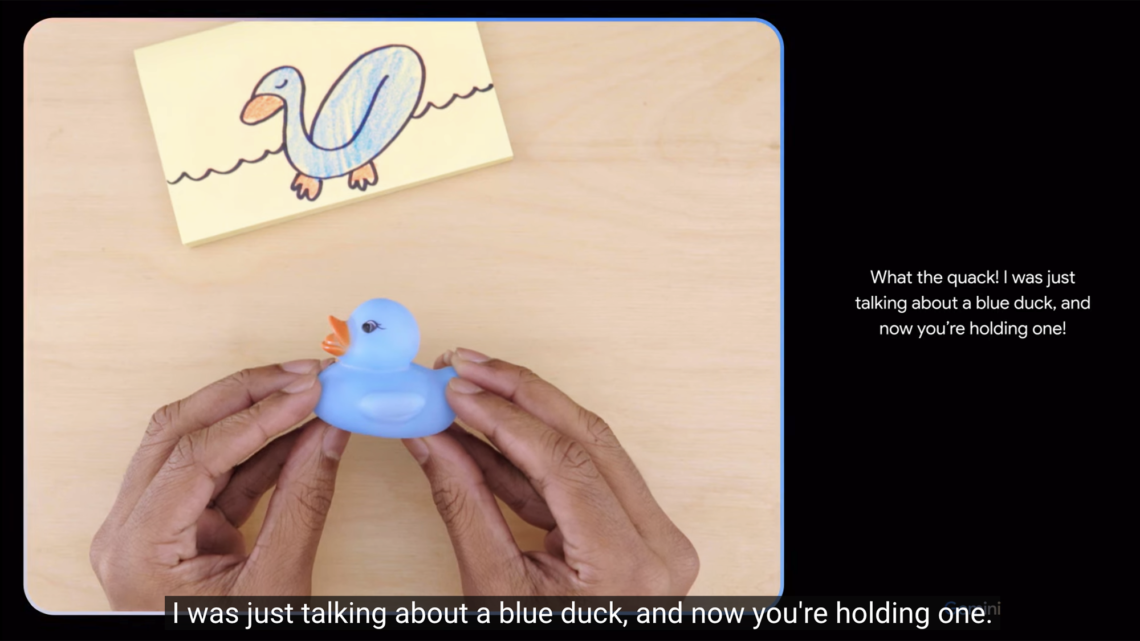

Unlike many of its AI competitors, Gemini was trained to be “multimodal” from launch, meaning it can already handle text, audio, and image-based prompts. In an accompanying video demonstration, Gemini is verbally asked to identify what is placed in front of it (a piece of paper) and then correctly identifies a user’s sketch of a duck in real time. Other abilities appear to include inferring what happens next in videos once they are paused, generating music based on visual prompts, and assessing children’s homework, often with a slightly cheeky, pun-prone personality. It’s worth noting, however, that the video description includes the disclaimer, “For the purposes of this demo, latency has been reduced and Gemini outputs have been shortened for brevity.”

Gemini’s accompanying technical report indicates the LLM’s most powerful iteration, Ultra, “exceeds current state-of-the-art results on 30 of the 32 widely-used academic benchmarks used in [LLM] research and development.” That said,…
