In a nondescript warehouse on Google’s sprawling campus in Mountain View, California, sit about a dozen cameras of various shapes and sizes. Some are attached to 500-pound rigs, others to what look like proton packs from Ghostbusters. There’s also a bike, a snowmobile and a car.

These cameras showcase both the evolution of Google’s Street View camera technology and the many methods the search giant uses to capture imagery around the world, from city blocks to open fields to mountaintops. There’s one camera at the very end of the row that, at first glance, looks like an unassuming computer screen. But below it sit four lenses that will capture 3D aerial imagery once the apparatus is mounted to an airplane.

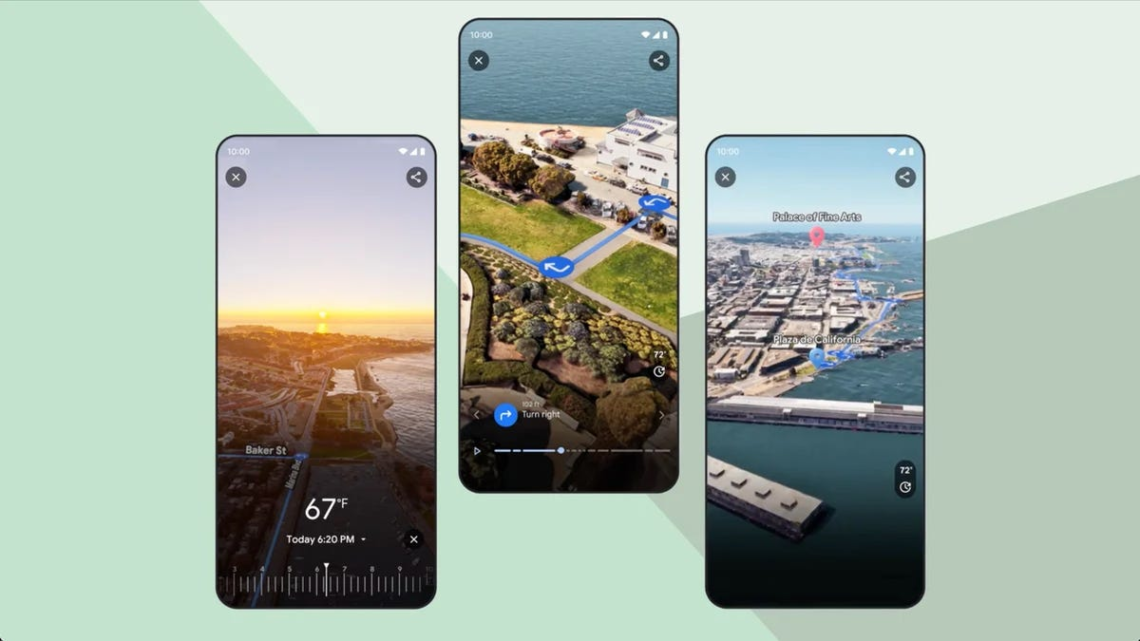

This camera is used to shoot footage for Google Maps’ latest feature, called Immersive View, which shows a three-dimensional rendering of your destination, including buildings, cars, trees and even birds. You can move a time slider to see what the weather will look like in an hour, or how many cars will be on the road when you’re driving home from work. And instead of seeing just a red line to symbolize traffic, as you would in the standard Maps view, you’ll see images of individual cars backed up to really paint the picture.

There are two versions of Immersive View: one that lets you explore places like landmarks and parks, which is already available, and another for previewing routes. The latter is rolling out now, starting in cities like San Francisco, New York and London. This option lets you glide along your route to see where every turn will be and what your surroundings will look like each step of the way.

Even though Immersive View looks lifelike, it doesn’t use real-time images. Google creates these digital models by combining footage captured for Street View with images taken with its 3D aerial camera, which is similar to ones used for Hollywood films. AI and computer vision help to align all the imagery and identify objects like street signs,…
